- The Integrity Problem in Online Testing

- What Question Randomization Actually Means

- How Randomization Deters Cheating

- Randomization as a Fairness Tool, Not Just Security

- The Hidden Pitfalls: When Randomization Backfires

- Best-Practice Design for Fair, Cheat-Resistant Randomization

- How to Use OnlineExamMaker for Question Randomization

- Beyond Randomization: Building a Holistic Integrity Strategy

- Conclusion

The Integrity Problem in Online Testing

Online exams have become the norm. Whether you’re running corporate compliance training, a professional certification, or a university midterm, the shift to digital testing has opened up a Pandora’s box of integrity concerns. Students screenshot questions. Colleagues pass along answer keys in group chats. Test-takers sitting exams days apart share what they remember. Cheating has never felt so easy.

So what do assessment professionals actually do about it? One of the most effective—and surprisingly underused—defenses is question randomization. That means shuffling question orders, drawing different items from large question banks, and scrambling answer choices so that no two test forms look exactly alike. Simple in concept. Powerful in practice.

This article breaks down how question randomization works, why it matters for both security and fairness, where it can go wrong, and how tools like OnlineExamMaker make it easy to do right.

What Question Randomization Actually Means

Randomization is not just one thing. It’s a family of techniques, each targeting a different cheating vector:

- Question order shuffling: Every test-taker sees the same items, but in a different sequence. “What’s the answer to question 5?” becomes useless when everyone’s question 5 is different.

- Random selection from item banks: The platform draws a subset of questions from a larger pool, generating unique test forms. Two students may share only a handful of items—or none at all.

- Answer option shuffling: The correct answer isn’t always in position B. Each student’s answer choices appear in a randomized order, killing positional memorization cold.

Platforms like Canvas, Brightspace, TAO, and YouTestMe all support these configurations. In practice, a well-designed exam might pull 30 questions from a bank of 120 and shuffle both question order and answer options. The number of possible forms is then far larger than any realistic cohort, so two test-takers are very unlikely to see identical exams.

| Technique | What It Does | Cheating Vector It Disrupts |
|---|---|---|
| Question order shuffling | Reorders fixed set of items per test-taker | Answer sharing by question number |
| Random pool selection | Draws unique subset from a larger bank | Question harvesting between sittings |
| Answer option shuffling | Randomizes position of correct/incorrect options | Positional memorization (“always B”) |
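The three techniques in the table can be combined in a few lines. The following is a minimal, platform-independent sketch, not the implementation used by any of the products named above. The `Item` class, the `build_form` function, and the convention that the first stored option is the correct one are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """Hypothetical bank item; options[0] is assumed to be the correct answer."""
    item_id: str
    stem: str
    options: tuple


def build_form(bank, n_items, seed):
    """Build one test form for one test-taker.

    A per-test-taker seed makes the form reproducible for later review.
    """
    rng = random.Random(seed)

    # Random pool selection: draw n_items distinct items from the bank.
    drawn = rng.sample(bank, n_items)

    # Question order shuffling: made explicit here, although sample()
    # already returns items in random order.
    rng.shuffle(drawn)

    form = []
    for item in drawn:
        # Answer option shuffling: permute the options and record where
        # the correct answer ended up so scoring still works.
        options = list(item.options)
        rng.shuffle(options)
        form.append({
            "item_id": item.item_id,
            "stem": item.stem,
            "options": options,
            "answer_index": options.index(item.options[0]),
        })
    return form


# Example: a 120-item bank, 30 items per form, as in the text above.
bank = [
    Item(f"Q{i}", f"Stem {i}", ("right", "wrong 1", "wrong 2", "wrong 3"))
    for i in range(120)
]
form = build_form(bank, n_items=30, seed=42)
```

Storing the answer position per form, rather than assuming a fixed key, is what lets automatic scoring survive option shuffling.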

How Randomization Deters Cheating

Let’s be honest: determined cheaters are creative. But question randomization quietly dismantles the most common schemes without requiring surveillance cameras or AI proctors.

Real-time answer sharing falls apart when question orders differ. If you and your neighbor are working through questions in completely different sequences, “number 7 is C” is actively misleading. If answer options are shuffled as well, it gets even worse for the would-be cheat.

Question harvesting (memorizing or photographing exam items to share with students taking the test later) is undermined by large item pools. When a platform pulls 25 questions at random from a bank of 100, a harvested set of 20 items covers, on average, only 5 of the 25 questions on any later form (25 × 20 / 100). Pair that with periodic bank refresh and the scheme loses most of its payoff.
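The harvesting arithmetic can be checked with a short calculation. This sketch assumes that every form is a uniform random draw from the bank and that all harvested items are still in the bank, in which case the overlap follows a hypergeometric distribution. The function name `overlap_pmf` is made up for this example.

```python
from math import comb


def overlap_pmf(bank_size, form_size, harvested, k):
    """Probability that exactly k harvested items appear on a random form.

    Hypergeometric: choose k of the harvested items and the remaining
    form_size - k from the non-harvested part of the bank.
    """
    return (
        comb(harvested, k)
        * comb(bank_size - harvested, form_size - k)
        / comb(bank_size, form_size)
    )


bank_size, form_size, harvested = 100, 25, 20

# Expected number of harvested items a later test-taker will see.
expected_overlap = form_size * harvested / bank_size

# Chance that a later form contains at least half of the harvested set.
p_at_least_10 = sum(
    overlap_pmf(bank_size, form_size, harvested, k)
    for k in range(10, harvested + 1)
)

print(f"expected overlap: {expected_overlap} of {form_size} items")
print(f"P(10 or more harvested items on a form): {p_at_least_10:.4f}")
```

Re-running the calculation with a larger bank or a smaller form shows how quickly the expected overlap shrinks as pool depth grows.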

Collusion patterns become harder to hide analytically. When unusual answer agreement appears between test-takers who received different forms, that’s a flag. Randomization doesn’t just deter cheating—it makes statistical detection of cheating more powerful.
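One simple way to look for such agreement is to count identical wrong answers on the items two test-takers had in common. The sketch below is only a first-pass screen under stated assumptions: responses are stored by item ID and by option content (not on-screen position), and the `threshold` value is arbitrary. The function names are hypothetical, and a flagged pair is a reason for human review, not proof of collusion.

```python
from itertools import combinations


def shared_wrong_matches(resp_a, resp_b, key):
    """Count items both test-takers saw and answered with the same wrong option."""
    shared_items = resp_a.keys() & resp_b.keys()
    return sum(
        1
        for item in shared_items
        if resp_a[item] == resp_b[item] and resp_a[item] != key[item]
    )


def flag_pairs(responses, key, threshold):
    """Return (taker_a, taker_b, matches) for pairs at or above the threshold."""
    flagged = []
    for a, b in combinations(sorted(responses), 2):
        matches = shared_wrong_matches(responses[a], responses[b], key)
        if matches >= threshold:
            flagged.append((a, b, matches))
    return flagged


# Toy data: each test-taker saw a different subset of a four-item bank.
key = {"Q1": "a", "Q2": "b", "Q3": "c", "Q4": "d"}
responses = {
    "ann": {"Q1": "x", "Q2": "y", "Q3": "c"},
    "bob": {"Q1": "x", "Q2": "y", "Q4": "d"},
    "cat": {"Q1": "a", "Q3": "c", "Q4": "z"},
}
flagged = flag_pairs(responses, key, threshold=2)
```

Matching wrong answers are more informative than matching right ones, because well-prepared test-takers are expected to agree on correct answers anyway.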

Randomization as a Fairness Tool, Not Just Security

Here’s the part that often gets overlooked: randomization isn’t only about catching cheaters. Done well, it’s a fairness mechanism.

Item order can affect performance under time pressure. Students who encounter harder questions early may spend longer on them, leaving less time for questions they’d otherwise nail. When question order is fixed, these effects are systematic: the same students always bear the cognitive cost of a tough opening block. Randomization distributes those effects across the population, so no single cohort is consistently disadvantaged by sequence.

Random selection from item banks also supports parallel test forms—different versions that cover the same content blueprint and difficulty profile. This prevents the scenario where Group A happens to get the easier Monday sitting while Group B faces a tougher Wednesday version.

When combined with stratified sampling—drawing items proportionally from each content area and difficulty tier—randomization actively promotes equity. Everyone gets a test that covers the same ground, at comparable challenge levels, regardless of when or where they sit.
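Stratified sampling of this kind can be sketched as follows. The field names `area` and `tier`, the `blueprint` dictionary, and the `stratified_draw` function are assumptions for illustration. Real platforms expose this through their own section or category settings.

```python
import random
from collections import defaultdict


def stratified_draw(bank, blueprint, seed):
    """Draw a form that matches the blueprint exactly.

    bank:      list of dicts with 'id', 'area', and 'tier' keys
    blueprint: {(area, tier): number of items to draw from that stratum}
    """
    rng = random.Random(seed)

    # Group the bank by (content area, difficulty tier).
    strata = defaultdict(list)
    for item in bank:
        strata[(item["area"], item["tier"])].append(item)

    form = []
    for stratum, count in blueprint.items():
        pool = strata[stratum]
        if len(pool) < count:
            raise ValueError(f"stratum {stratum} has only {len(pool)} items")
        form.extend(rng.sample(pool, count))

    # Shuffle so items do not appear grouped by stratum.
    rng.shuffle(form)
    return form


# Toy bank: 10 items in each of four strata.
bank = [
    {"id": f"{area}-{tier}-{i}", "area": area, "tier": tier}
    for area in ("conceptual", "applied")
    for tier in ("easy", "hard")
    for i in range(10)
]

# Blueprint: 40% conceptual, 60% applied, split evenly by difficulty.
blueprint = {
    ("conceptual", "easy"): 2,
    ("conceptual", "hard"): 2,
    ("applied", "easy"): 3,
    ("applied", "hard"): 3,
}
form = stratified_draw(bank, blueprint, seed=7)
```

Every form drawn this way has the same content and difficulty mix, and only the specific items differ from one test-taker to the next.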

The Hidden Pitfalls: When Randomization Backfires

None of this is magic. Naive randomization—simply toggling a “shuffle” switch without any design discipline—can introduce new unfairness even as it reduces cheating.

- Unequal difficulty across forms: If a pool contains items with wildly varying difficulty and you draw randomly, some students may get a harder batch by chance. Without pre-calibration, this is a real risk.

- Broken item types: Answer option shuffling is inappropriate for items with “all of the above” choices, position-sensitive stems, or complex formats like drag-and-drop ordering. Shuffling these can make the item unsolvable or introduce scoring errors.

- Speededness effects: In timed exams where unanswered items score as wrong, a difficult opening sequence can hurt slower—but equally competent—students disproportionately.

The lesson: randomization is a tool, not a silver bullet. It needs to be designed, not just switched on.
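The broken-item pitfall can be avoided by making option shuffling conditional on per-item flags. In this sketch the `shuffleable` and `pin_last` fields are hypothetical metadata, not settings from any particular platform. Pinning an option such as “All of the above” to the end is shown as one possible alternative to disabling shuffling for the whole item.

```python
import random


def shuffle_options(item, rng):
    """Return the options in display order, honoring the item's flags."""
    options = list(item["options"])

    # Sequence-dependent items (ordered scales, ranked lists) stay as authored.
    if not item.get("shuffleable", True):
        return options

    # Options such as "All of the above" keep their place at the end;
    # only the remaining options are permuted.
    pinned = [o for o in options if o in item.get("pin_last", ())]
    movable = [o for o in options if o not in pinned]
    rng.shuffle(movable)
    return movable + pinned


rng = random.Random(0)

aota_item = {
    "options": ["Phishing", "Tailgating", "Pretexting", "All of the above"],
    "pin_last": ["All of the above"],
}
scale_item = {
    "options": ["Never", "Sometimes", "Often", "Always"],
    "shuffleable": False,
}

aota_display = shuffle_options(aota_item, rng)
scale_display = shuffle_options(scale_item, rng)
```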

Best-Practice Design for Fair, Cheat-Resistant Randomization

Getting randomization right comes down to a few non-negotiable principles:

- Use stratified, blueprint-driven sampling. Don’t draw randomly from the whole bank. Draw proportionally from content sections and difficulty tiers. If your test should be 40% conceptual and 60% applied, your random draw should honor that split every time.

- Pre-calibrate your item bank. Before any question enters the pool, it should have a known (or estimated) difficulty level, typically from pilot data. Items that perform unexpectedly—too easy, too hard, or statistically odd—should be reviewed or retired.

- Maintain sufficient pool depth. A good rule of thumb: your bank should contain at least 3–4 times as many items as you draw per form. This limits item re-exposure across sittings and keeps harvesting difficult.

- Flag items incompatible with answer shuffling. Mark “all of the above,” ordered-list, and sequence-dependent items as non-shuffleable in your platform settings.

- Run post-exam analytics. Compare score distributions across forms. Monitor item statistics by position. If Form A is consistently outperforming Form B, your blueprint needs tightening.
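The post-exam comparison in the last point can start with something as simple as per-form summary statistics and a standardized mean difference. The sketch below assumes a small number of fixed parallel forms with the scores already grouped by form. The function names and the sample scores are invented for illustration, and a large gap is a prompt to review the blueprint, not a formal equating procedure.

```python
from math import sqrt
from statistics import mean, stdev, variance


def form_summary(scores_by_form):
    """Per-form count, mean, and sample standard deviation."""
    return {
        form: {"n": len(scores), "mean": mean(scores), "sd": stdev(scores)}
        for form, scores in scores_by_form.items()
    }


def standardized_gap(scores_a, scores_b):
    """Mean difference divided by the pooled standard deviation (Cohen's d)."""
    n_a, n_b = len(scores_a), len(scores_b)
    pooled_var = (
        (n_a - 1) * variance(scores_a) + (n_b - 1) * variance(scores_b)
    ) / (n_a + n_b - 2)
    return (mean(scores_a) - mean(scores_b)) / sqrt(pooled_var)


# Invented scores for two parallel forms.
scores_by_form = {
    "A": [70, 75, 80, 85, 90],
    "B": [60, 65, 70, 75, 80],
}
summary = form_summary(scores_by_form)
gap = standardized_gap(scores_by_form["A"], scores_by_form["B"])
print(summary)
print(f"standardized gap (A minus B): {gap:.2f}")
```

With realistic cohort sizes, a gap that persists across sittings suggests the forms are not equally difficult. A gap that appears once may simply reflect who happened to take each form.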

How to Use OnlineExamMaker for Question Randomization

OnlineExamMaker is a cloud-based exam platform built for exactly this kind of thoughtful, scalable assessment design. Whether you’re an HR manager running a skills certification, a trainer delivering compliance exams, or a teacher managing a large course, it puts serious randomization power in an interface that doesn’t require a testing specialist to operate.

Here’s how to set up question randomization in OnlineExamMaker, step by step:

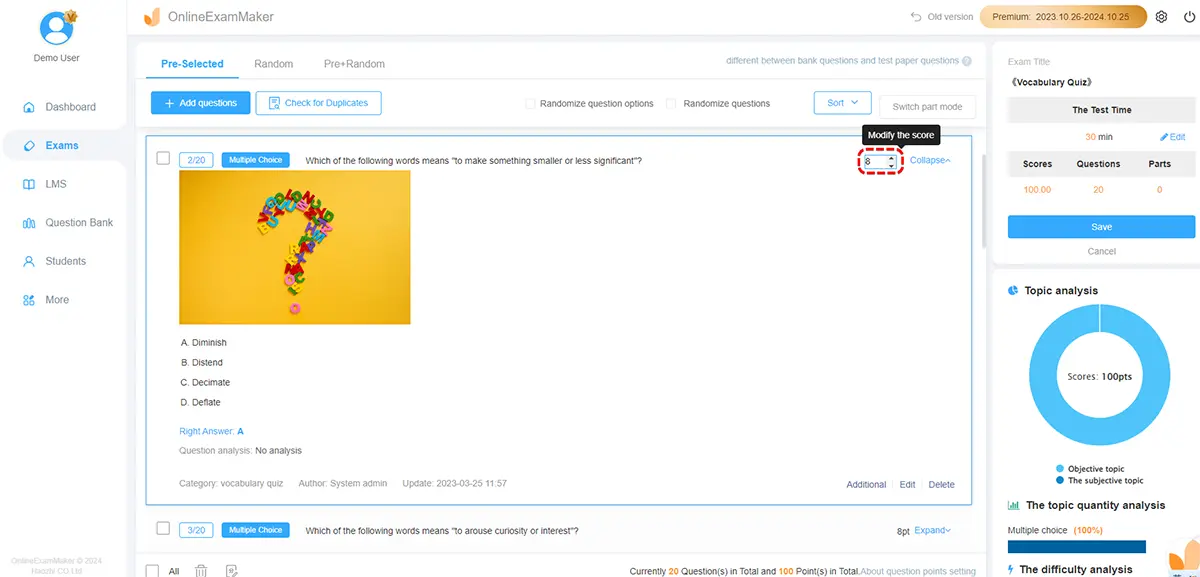

Step 1: Build Your Question Bank

Start by creating a robust question library. OnlineExamMaker supports multiple question types—multiple choice, true/false, fill-in-the-blank, matching, and more. You can import questions in bulk or use the built-in AI Question Generator to rapidly build a large, diverse item bank from your course materials. More items in the bank means stronger randomization and lower item exposure per test-taker.

Step 2: Organize Questions into Sections or Categories

Tag your questions by topic, cognitive level, and difficulty. This is the groundwork for stratified sampling. If your exam covers three modules, create separate question groups for each so the platform can draw proportionally from each section.

Step 3: Configure Randomization Settings

When creating a new exam in OnlineExamMaker:

- Select Random Questions to draw a specified number from each section’s pool.

- Enable Shuffle Question Order so that even test-takers who receive the same items see them in different sequences.

- Enable Shuffle Answer Options for applicable item types to eliminate positional memorization.

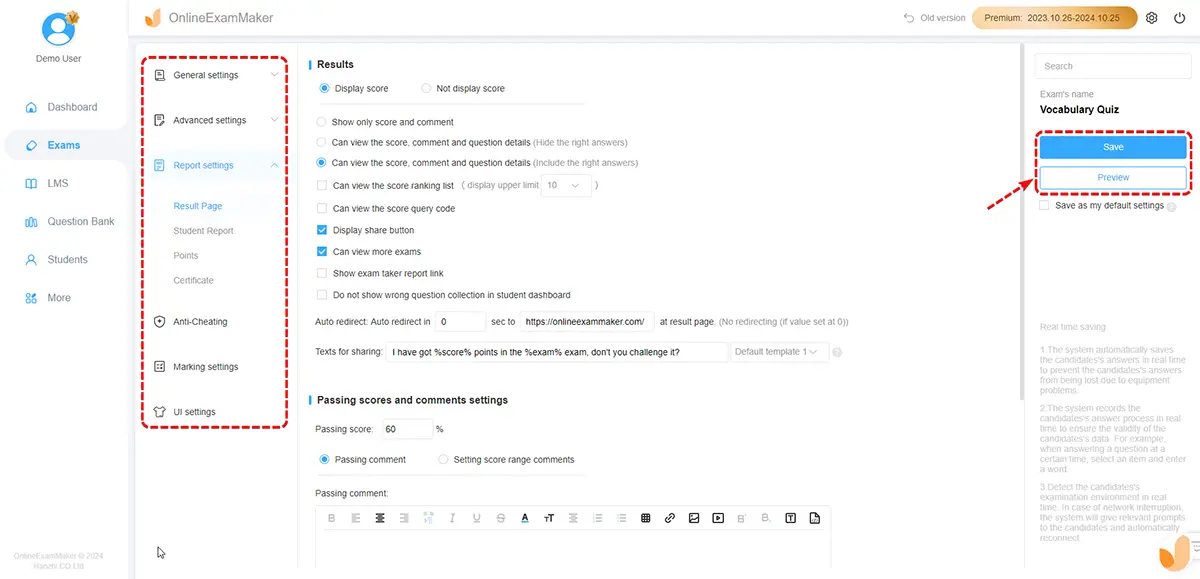

Step 4: Set Time Limits and Security Controls

Pair randomization with a time window that’s fair but not leisurely. OnlineExamMaker also offers AI Webcam Proctoring to flag suspicious behavior during the exam, and you can restrict access to results and feedback until after all sittings are complete.

Step 5: Review Results with Automatic Grading

Once the exam closes, Automatic Grading handles scoring instantly across all randomized forms. You can then review score distributions by form, flag any items with anomalous statistics, and refine your bank for the next cycle.


Beyond Randomization: Building a Holistic Integrity Strategy

Randomization is a cornerstone, not the whole building. The most defensible assessment programs layer multiple controls:

- Secure browsers that prevent tab-switching, copy-pasting, or screen capture.

- Proctoring (AI-based or human) for high-stakes exams where the cost of cheating is serious.

- Tight time windows that limit the opportunity to consult resources or collaborators.

- Application-level question design that asks students to analyze, evaluate, or create rather than simply recall, so that shared answers are less useful.

- Post-exam feedback delays so that students finishing early can’t brief those sitting later.

The deeper point is cultural. Randomization reduces the payoff of cheating. Strong assessment design reduces the motivation. Transparent communication about why these measures exist—framing them as fairness tools for honest students, not punitive surveillance—builds the kind of trust that actually changes behavior.

Conclusion

Question randomization, at its best, is both a security measure and an ethical commitment. It says: every test-taker deserves an exam that reflects their own knowledge, not someone else’s preparation. That’s a principle worth designing for—carefully, deliberately, and with the right tools.

The takeaway is clear: randomization works, but only when it’s implemented with attention to content coverage, difficulty balance, and item design. Toggle it on carelessly and you trade one form of unfairness for another. Do it well, with stratified blueprints, calibrated item banks, and smart post-exam analytics, and you have one of the most reliable integrity mechanisms available.

For teachers, trainers, HR managers, and enterprise assessors ready to take exam integrity seriously, OnlineExamMaker makes this level of sophistication genuinely accessible. Build your bank, configure your blueprint, turn on randomization, and let the platform handle the rest. Your honest test-takers will thank you—even if they never know why.